What We’re Getting Wrong About AI Equity

How liberty is impacted by AI

This newsletter serves as your bridge from the real world to the advancements of AI & other emerging technologies, specifically contextualized for education.

Dear Educators & Friends,

What’s unfolding across the United States right now has left many of us shaken. People are watching acts of violence, community disruption, and the erosion of civil liberties play out in real time. For many, it has triggered a deeper reckoning about what it means to be American, who is protected, who is vulnerable, and what freedoms we can no longer take for granted.

In moments like this, writing about education, let alone technology in education, can feel distant and even trivial. There are days when the work feels painfully small compared to the scale of what’s happening around us.

And yet, education is one of the few places where we still get to decide what kind of society we are actively building - through the systems we design, the values we encode, and the relationships we protect. Staying with this work is one of the ways we insist on a future that works for more than a few.

If we take that responsibility seriously, then we also have to look closely at the systems we’re building next.

Artificial intelligence is not arriving in education at a neutral moment. It’s entering a society already grappling with questions of power, protection, and who gets to make decisions on behalf of others. The choices we make about how AI is used in learning environments will shape far more than efficiency or outcomes. They will shape who has access to human care, who is subject to automated decision-making, and whose lives are most mediated by algorithms.

That’s where this newsletter issue begins.

Who Gets Humans?

When AI and equity come up in education, the conversation tends to land in the same place. People worry that students in under-resourced communities won’t have access to AI. That they’ll be left behind as wealthier districts experiment with new tools, personalized tutors, and AI-enhanced learning environments. It’s a reasonable fear because access is important.

But I’m increasingly convinced that we’re preparing for the wrong scarcity.

AI is not becoming rare; it’s becoming ambient. As models get cheaper and more deeply integrated into the digital products we already rely on, access to AI will begin to look like access to calculators or spellcheck: uneven in quality, yes, but broadly present. I think the real question isn’t who will get AI… it’s who will get humans.

I spoke about this recently on the Sparking Genius podcast, and the idea has been hard to shake. As AI becomes good enough at delivering instruction, feedback, and practice, it will increasingly be used to do exactly that, especially in systems under financial pressure.

If an AI system can reliably teach a student to read, walk them through math concepts, or provide language support on demand, it becomes very hard for underfunded systems not to lean on it. This is where the equity conversation flips. In more privileged contexts, AI is likely to function as an addition that handles routine tasks so teachers can spend more time mentoring, conferencing, and helping students make meaning of what they’re learning. In less privileged contexts, AI risks becoming the environment itself. Human teachers, in these environments, become a luxury.

SAMR Reframed

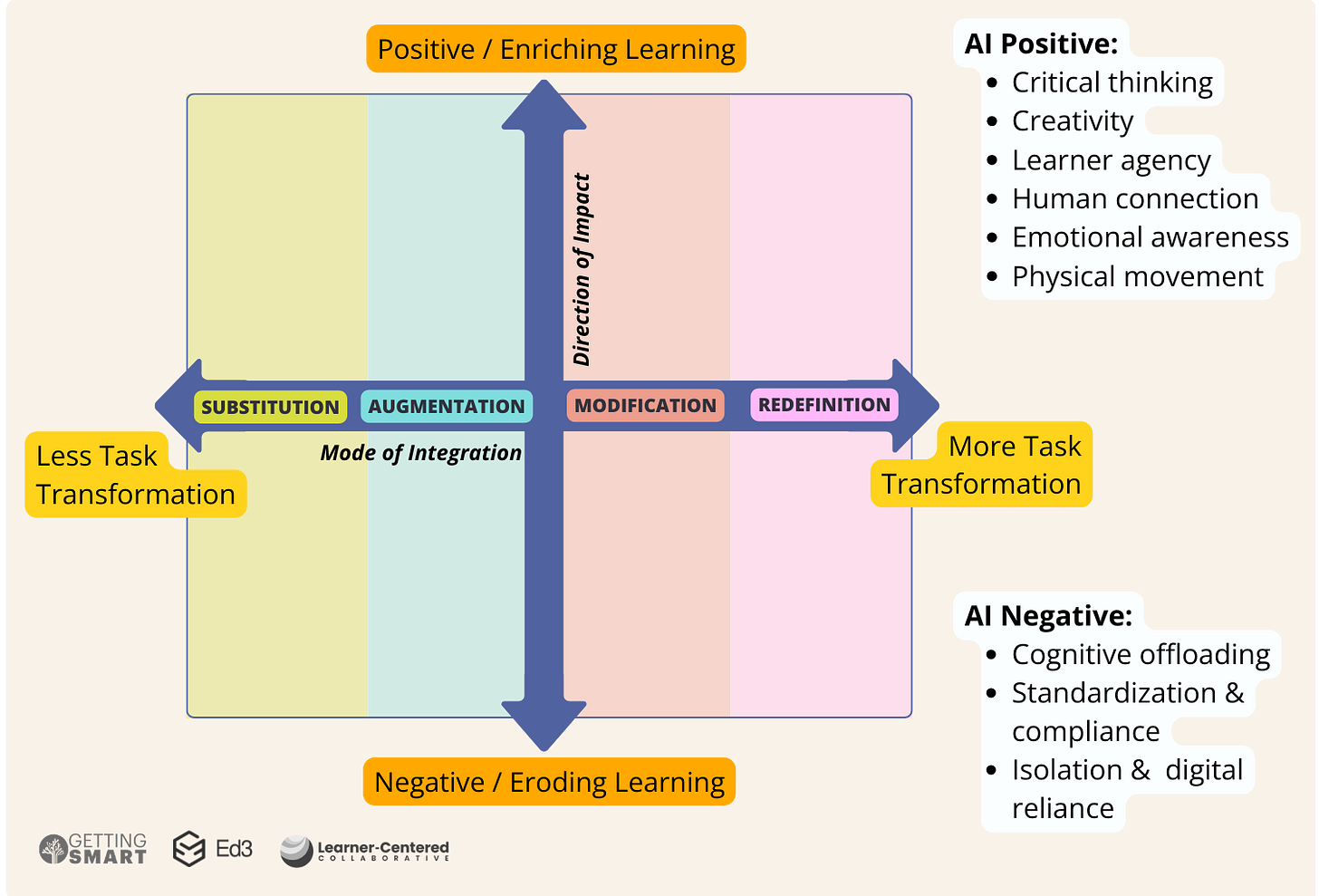

For years, educators have used the SAMR model to describe how technology changes learning tasks: Substitution, Augmentation, Modification, Redefinition. Over time, what began as a reflective framework turned into a hierarchy. Substitution came to mean basic and redefinition came to mean transformational.

AI collapses that hierarchy.

With AI, the sophistication of a task tells us very little about the quality of learning. Substituting an analog task with a digital tool can be constructive if it frees teachers for presence, feedback, and relationship. The very same substitution can be corrosive if it replaces those moments altogether. The difference lies in the direction in which AI pushes the system: whether it expands human capacity or replaces it, whether it builds agency or outsources it. (Read more about the reframing of SAMR here.)

That distinction matters enormously for equity, because substitution is often where under-resourced systems begin. Substitution reduces load and helps people survive. But when no one asks what’s being replaced, survival strategies can slowly hollow out the very things students need most.

And this erosion doesn’t stop at instruction.

Culture of Algorithms

Most people will not access AI through premium products. They will access it through free ones. And free AI is not actually free - free AI is funded by data.

As students, families, and educators rely on these systems for learning, problem-solving, and decision-making, they give away enormous amounts of personal information, often without realizing it. Over time, these systems learn how users think, what they struggle with, what motivates them, and what they are most likely to believe. That knowledge becomes influence, and it is precisely the reason why AI companions are successfully taking over the market.

When an AI knows you well, persuasion doesn’t look like advertising; it looks like help - a suggestion framed as advice or an idea framed as common sense. OpenAI has recently announced that ads will appear on its free models, which is a logical business move, but it also points toward a future where AI systems don’t just answer questions or support learning… they mediate what people see, buy, trust, and believe.

The equity risk here is not subtle. Those with fewer resources will be more exposed to data-extractive systems. Over time, this creates a world where some people grow up buffered by human relationships and institutional protection, while others navigate a reality shaped almost entirely by algorithms.

This is a civic issue.

If most people learn and make sense of the world through systems shaped by commercial incentives, culture itself begins to bend around algorithms. Over time, this makes societies easier to influence and harder to deliberate. Political ideas, narratives, and agendas can be personalized, optimized, bought, and delivered at scale, not just through mass messages, but also through individually tailored streams that are nearly impossible to audit from the outside.

This is where the missing dimension in how we evaluate technology becomes impossible to ignore. We have to start looking at AI through the lens of what it will strengthen or replace, and whether it deepens human connection and agency or weakens them.

Ed3’s Portrait of a Teacher in the Age of AI project (join us via the Brain Trust) is exploring some of these ideas, demanding that human educators be uplifted by AI and, at the same time, not become a luxury good - unaffordable and unevenly distributed.

AI is already raising the floor for what humans can accomplish. But humans still need to define the ceiling and ensure we protect agency, our civil liberties, and our human connections. If we get this wrong, we won’t just automate learning; we’ll automate inequality at scale.

But I remain deeply hopeful that we can still get this right.

Warmly yours,

Vriti

P.S. Will you be at TCEA? I’m honored to be the opening keynote and would love to connect. Feel free to reply here or reach out by email if you’ll be there.

I’m Vriti - Founder & CEO of Ed3 non-profit, facilitator of Portrait of a Teacher in the Age of AI, new mom, public speaker, and researcher.

This piece articulates a crucial perspective on the non-neutral context of AI implementation, which is fundamental to ethical development.